
March 11, 2021

REM™ Gives Our Autonomous Vehicles the HD Maps They Need

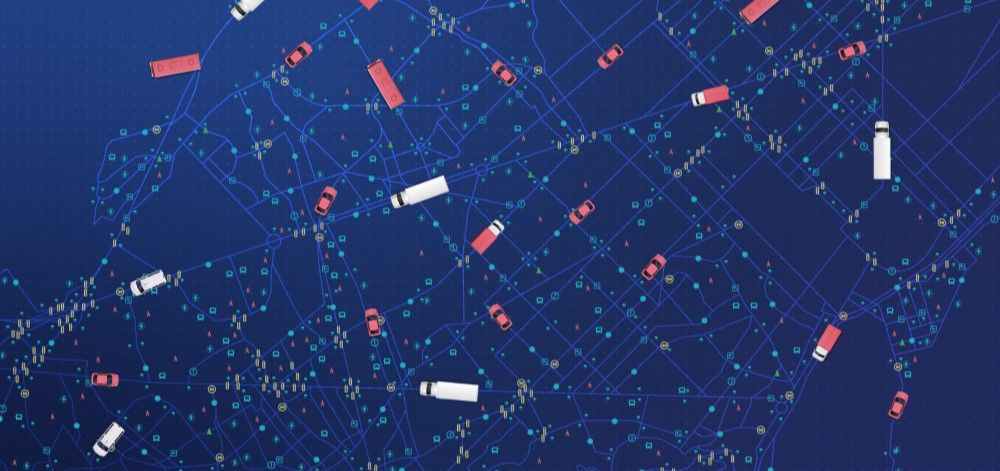

Mobileye’s Road Experience Management™ system leverages our expertise in computer vision and the power of the crowd to supply our autonomous vehicles with an ever-growing and constantly updating database of highly precise, high-definition digital maps.

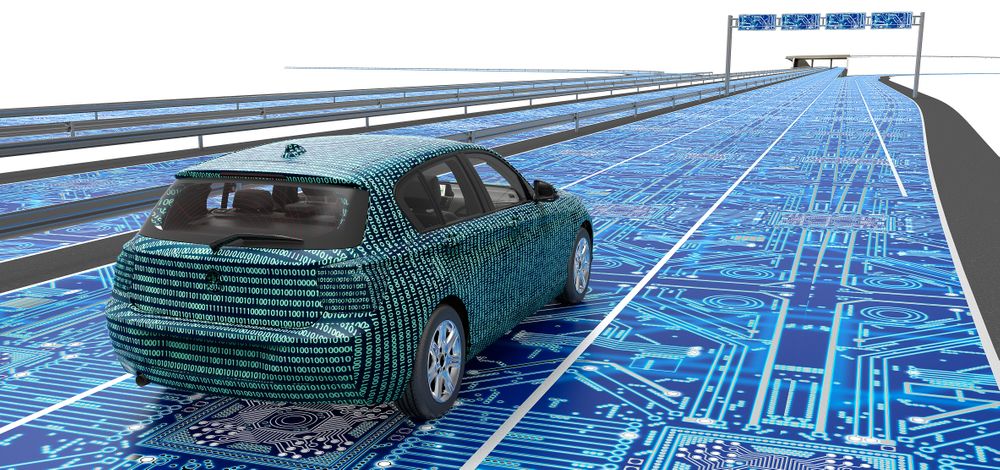

Illustration of a vehicle traveling on a digitized highway

From memory to environmental cues to asking for directions, human drivers have many ways of figuring out where they’re going – of which consulting a map is just one. But for computer-operated autonomous vehicles, maps will be absolutely essential to supplement the AV’s onboard sensors and make the self-driving system safer and more reliable.

And not just any maps will do: to effectively and safely drive themselves, AVs will require a database of maps far more detailed, precise, and up-to-date than the app on your phone or the satellite navigation system built into your car’s dashboard display (never mind that old road atlas in your glovebox).

That much we determined early on in our research into autonomous vehicles, and the rest of the industry has by now largely come around to the same conclusion. The question is how to best create those maps. Mobileye’s answer is REM™.

Mapping by Convention vs the Mobileye Way

The digital-mapping method commonly practiced across the industry revolves around dispatching fleets of dedicated mapping vehicles. Those vehicles are hugely expensive to acquire and operate, and generate an enormous amount of data to store, transmit, and process. As a result, those (typically LiDAR-intensive) mapping vehicles can only be feasibly deployed on so many sections of roadway at a time, and can only remap those roads so often while the driving environment changes constantly.

By contrast, Mobileye’s Road Experience Management™ (REM) system draws data from the masses of cars already on the road equipped with our cameras and chips. The fully anonymized data is uploaded to the cloud in small packets and processed on a continuous basis to create the Mobileye Roadbook™ – a database of highly precise, high-definition maps by which our AVs will be able to drive in the not-so-distant future.

More than Meets the AV’s Eye

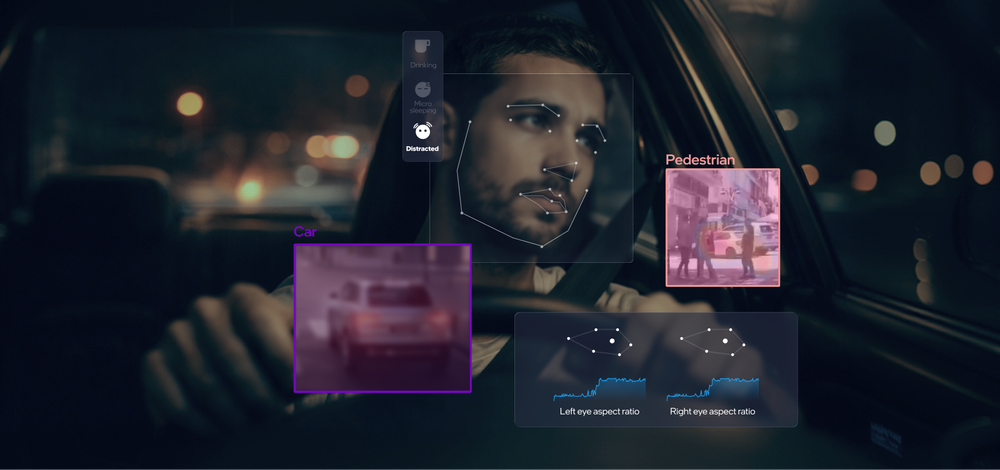

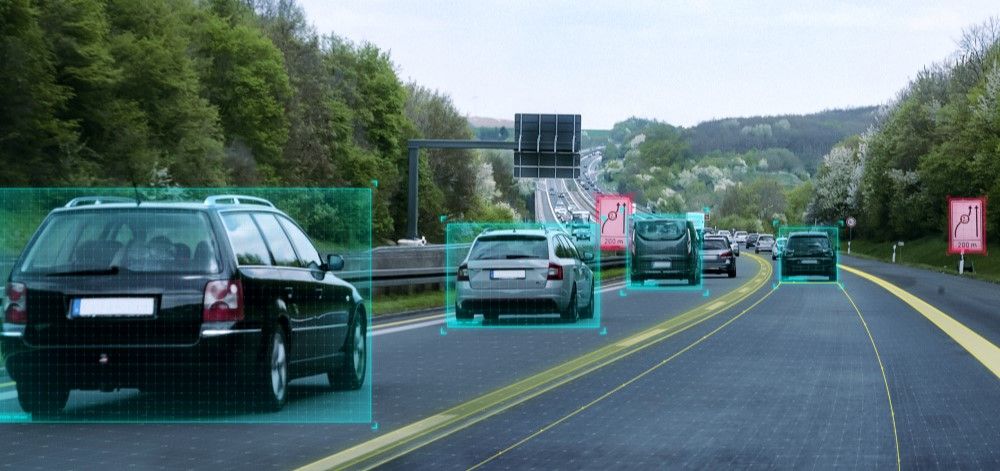

Like today’s advanced driver-assistance systems, autonomous vehicles will rely on onboard sensors to perceive the surrounding environment in real time, and that perception in turn serves as the basis for their driving decisions. But on AVs, the sensors are augmented by the digital map, which provides an additional layer of up-to-date information: a reference for what the vehicle should expect to see ahead.

REM not only creates that map efficiently, but yields a map with more (and more up-to-date) information. By furnishing our AV with these living, breathing maps, the vehicle will be able to draw on records of how other vehicles drive on the same roadway, localize itself more precisely than a conventional GPS signal affords, and “see” around the next bend and over the next rise – regardless of obstructions, adverse visibility conditions, or other potential impediments to its onboard sensing capabilities.

This crucial development is achieved through an irreplicable confluence of three of Mobileye’s core strengths.

1) Harnessing the Power of the Crowd

To date, our technology has been fitted into more than 65 million vehicles and is currently offered on hundreds of models from dozens of the world’s leading automakers – including many of those deemed the safest and most advanced. Those cars already travel the roads we need to map on an ongoing basis. To harvest the raw data, in essence, all we need is for even a small proportion of them to keep doing what they’re already doing and relay what they’re “seeing” back to our network. REM does the rest: creating the Roadbook, constantly refreshing it, and imbuing it with a wide range of relevant parameters.

Among those, REM can track traffic patterns, recognize colors, and read text (like on road signs) along given segments of roadway – regardless of whether those signs were present when the original map was rendered. So a Roadbook-enabled vehicle approaching a construction zone, for example, stands to already know what’s coming up in advance, because other REM-harvesting vehicles will likely have already reported developments like the emergence of orange construction-zone warning signs and the shuffling of traffic out of closed-off lanes – details you wouldn’t get from a conventional static map.

2) Our (Computer) Vision for the Future

Mobileye was founded on computer-vision technology, and that expertise has only grown in the decades since. Our uncommon proficiency in this cutting-edge discipline of artificial intelligence propels the continued advancement of our driver-assistance technologies, our ongoing development of autonomous vehicles, and the rapid expansion of our mapping and road-surveying initiatives.

In stark contrast to LiDAR, the cameras that serve (both literally and figuratively) as the lens through which we apply our computer-vision technology are incredibly cost-effective – especially in relation to the high resolution and vivid color in which they’re capable of capturing the environment on which they’re trained. But what truly sets Mobileye apart is what we’re able to do with that imagery, and the agility with which our highly specialized and efficient algorithms are able to glean meaningful insights from what our cameras are picking up out on the open road.

3) Light & Lean

The most straightforward way to relay what those cameras are detecting might be to send the raw imagery or dense 3D representations of the driving environment directly to the cloud. But that would pose potentially serious privacy concerns, and it would also generate massive amounts of data requiring enormous bandwidth to transmit.

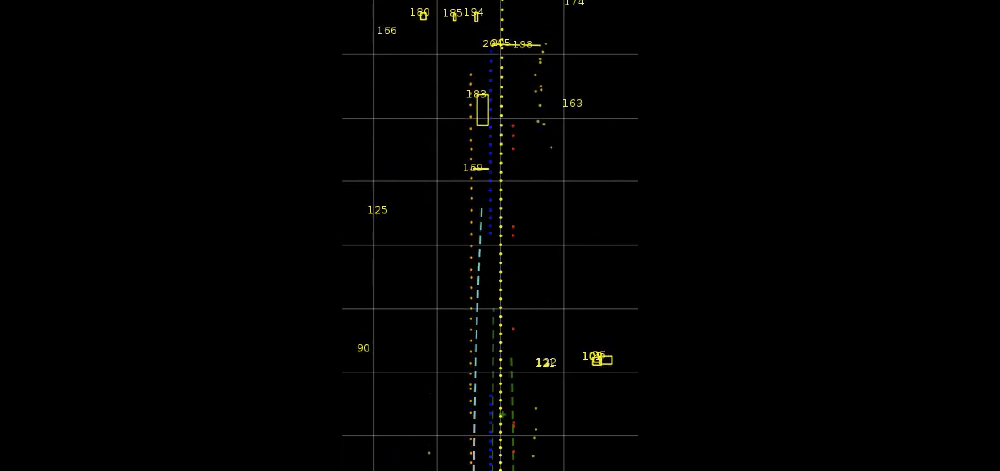

Mobileye’s solution is to process the data at the “edge,” on board the vehicle, and transmit the relevant information in much “lighter” packets of pre-classified and inherently anonymous data. These packets, which we call Road Segment Data (RSD), amount to only 10 kilobytes (roughly the size of this article’s plain text) per kilometer. They can be transmitted over the cellular modems with which new cars already commonly come equipped, and require only a sparse connection over existing 3G infrastructure rather than waiting for the widespread rollout of the emerging 5G standard.
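To put the 10-kilobytes-per-kilometer figure in perspective, here is a rough back-of-the-envelope sketch in Python. The function names are ours, chosen for illustration only, and the calculation assumes decimal units (1 kilobyte = 1,000 bytes) and a steady driving speed; it is not a description of how REM actually schedules its uploads.

```python
# Illustrative arithmetic only: names and unit conventions are assumptions,
# not part of any Mobileye API.
RSD_KB_PER_KM = 10.0  # figure quoted in the article


def rsd_upload_kb(distance_km: float, kb_per_km: float = RSD_KB_PER_KM) -> float:
    """Total RSD payload, in kilobytes, for a trip of the given length."""
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    return distance_km * kb_per_km


def average_uplink_kbps(speed_kmh: float, kb_per_km: float = RSD_KB_PER_KM) -> float:
    """Average uplink rate, in kilobits per second, at a steady speed."""
    kb_per_hour = speed_kmh * kb_per_km  # kilobytes generated per hour
    return kb_per_hour * 8 / 3600        # kilobytes -> kilobits, hours -> seconds


if __name__ == "__main__":
    print(f"50 km trip: {rsd_upload_kb(50):.0f} KB")
    print(f"At 100 km/h: {average_uplink_kbps(100):.2f} kbit/s on average")
```

Under these assumptions, a car cruising at highway speed would need only a couple of kilobits per second on average, which is why a sparse cellular connection suffices.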

While these detail-rich RSDs are used first and foremost for creating the map, we also use them for refreshing the map continuously. The parameters in each of these roadway “signatures” are compared automatically to the existing map, and where changes are detected, the Roadbook is updated. This method is not only more efficient than remapping entire sections of roadway from scratch, but results in a database more reliably current and accurate than we’ve determined achievable with LiDAR-equipped mapping vehicles.
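The compare-and-update idea can be sketched in a few lines of Python. Everything here is hypothetical: the `RoadSegmentData` class, the `apply_rsd` function, and the parameter names are invented for illustration, and a real system would presumably aggregate reports from many vehicles before changing the map rather than trusting a single one.

```python
from dataclasses import dataclass
from typing import Any, Dict


@dataclass
class RoadSegmentData:
    """Hypothetical stand-in for one RSD packet."""
    segment_id: str
    parameters: Dict[str, Any]  # e.g. {"lane_count": 3, "signs": (...)}


def apply_rsd(roadbook: Dict[str, Dict[str, Any]], rsd: RoadSegmentData) -> Dict[str, Any]:
    """Compare an incoming packet to the stored segment and update only what changed.

    Returns the parameters that differed from the existing map.
    """
    stored = roadbook.setdefault(rsd.segment_id, {})
    changes = {
        key: value
        for key, value in rsd.parameters.items()
        if key not in stored or stored[key] != value
    }
    stored.update(changes)  # untouched parameters are left as they were
    return changes


if __name__ == "__main__":
    roadbook = {"seg-1": {"lane_count": 3, "signs": ("speed_limit_80",)}}
    report = RoadSegmentData(
        "seg-1",
        {"lane_count": 2, "signs": ("speed_limit_80", "construction_warning")},
    )
    print(apply_rsd(roadbook, report))  # both parameters changed
    print(apply_rsd(roadbook, report))  # same report again: nothing to update
```

The point of the sketch is that only the differences are written back, so a segment that has not changed costs nothing to "remap."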

Real-World Applications

The convergence of these capabilities in REM is how we were able, for example, to map all of Japan, with the push of a button, in only 24 hours. That entire map, covering some 25,000 kilometers of road, takes up just 400 megabytes, and has been in use in consumer vehicles from a major domestic automaker for well over a year now. And there are even more applications for REM technology being deployed today, before autonomous vehicles are deployed at scale. Watch this space for more to come.
