
March 01, 2021

HD Maps vs AV Maps - The Crucial Differences

Powered by REM™, the Mobileye Roadbook™ provides AVs with the accurate, up-to-date information they need to operate effectively and safely.

Mobileye Roadbook™, powered by REM™

An HD map is not the same as an AV map. Compared to the map Mobileye created specifically for autonomous vehicles, the high-definition maps prevalent across the industry are at once over-specified and under-specified: they offer a lot of information that isn’t necessary while omitting details that are critical for an AV to navigate its surroundings.

The common approach taken with most HD maps built for self-driving applications is to digitize everything on and around the road and locate the AV within that map, offering global accuracy. But the AV doesn’t need to know about a traffic sign that’s miles away from where it is at any given moment. What it really needs to know is what lies within a radius of approximately 200 meters (about 650 feet) of its current location, and how it should drive accordingly.
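To make the "local horizon" idea concrete, here is a minimal sketch of keeping only the map elements within about 200 meters of the vehicle. It is an illustration, not Mobileye's implementation: it assumes map features are already expressed in a local planar frame measured in meters, and the `MapElement` type and `elements_in_horizon` function are hypothetical names introduced here.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MapElement:
    """Hypothetical map feature (sign, light, lane marker) in a local metric frame."""
    kind: str
    x: float  # meters east of an arbitrary local origin (assumption)
    y: float  # meters north of the same origin (assumption)


def elements_in_horizon(elements, vehicle_x, vehicle_y, radius_m=200.0):
    """Return only the elements whose straight-line distance to the vehicle is <= radius_m."""
    return [
        e for e in elements
        if math.hypot(e.x - vehicle_x, e.y - vehicle_y) <= radius_m
    ]


map_elements = [
    MapElement("traffic_light", 50.0, 20.0),    # ~54 m away
    MapElement("stop_sign", 150.0, -120.0),     # ~192 m away
    MapElement("speed_sign", 3000.0, 40.0),     # ~3 km away, irrelevant right now
]
nearby = elements_in_horizon(map_elements, 0.0, 0.0)
print([e.kind for e in nearby])  # ['traffic_light', 'stop_sign']
```

A linear scan keeps the sketch short; a real system would more likely use a spatial index and would also weigh heading and lane topology, not just straight-line distance.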

For all its impressive global accuracy, the typical HD map falls short of offering the AV semantic information: an understanding of the best way to navigate the road ahead. Put another way, HD maps don’t offer cues on how to drive, the intuition that human drivers accrue through experience. A conventional HD map can’t tell an AV which traffic light is relevant to the path it’s driving on, for instance, or where the best place to stop is for an unobstructed view at an intersection (unless such information is entered manually or ported over from an external source). The fundamental question, then, is how to develop a mapping solution that offers accuracy where it matters, along with the information that’s actually relevant to the autonomous vehicle’s operation. Enter: the Mobileye Roadbook™.

Mobileye Roadbook – Our Autonomous Vehicle Map

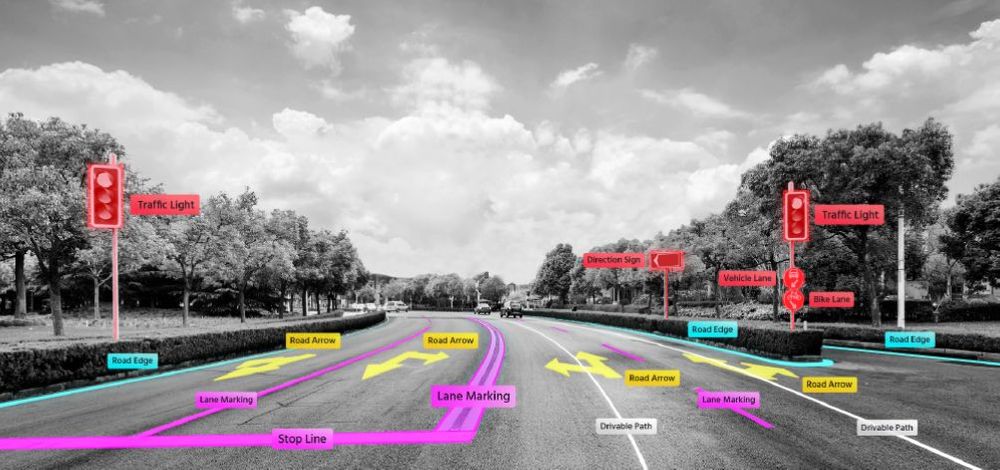

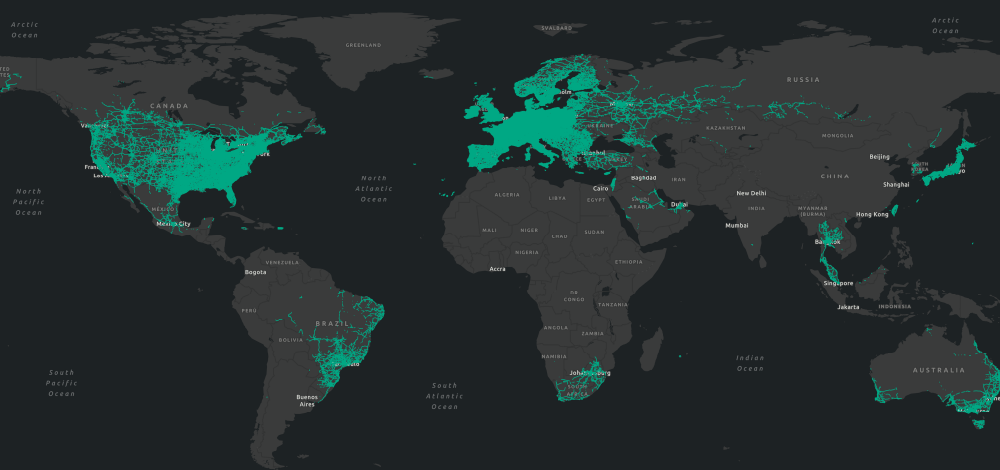

Designed specifically to enable self-driving vehicles, the Mobileye Roadbook is built on data collected, compiled, aligned, and modeled by REM™, Mobileye’s technology for mapping the world’s roadways from crowdsourced data. Like almost any road map, the Roadbook contains a wealth of relevant information on city streets, rural roads, and interurban highways, all at a level of accuracy that allows pinpoint localization down to 10 centimeters. But to give our AVs the precise information they need for decision-making on the move, the Roadbook encompasses a very different set of parameters from the typical database of street names, gas stations, and points of interest.

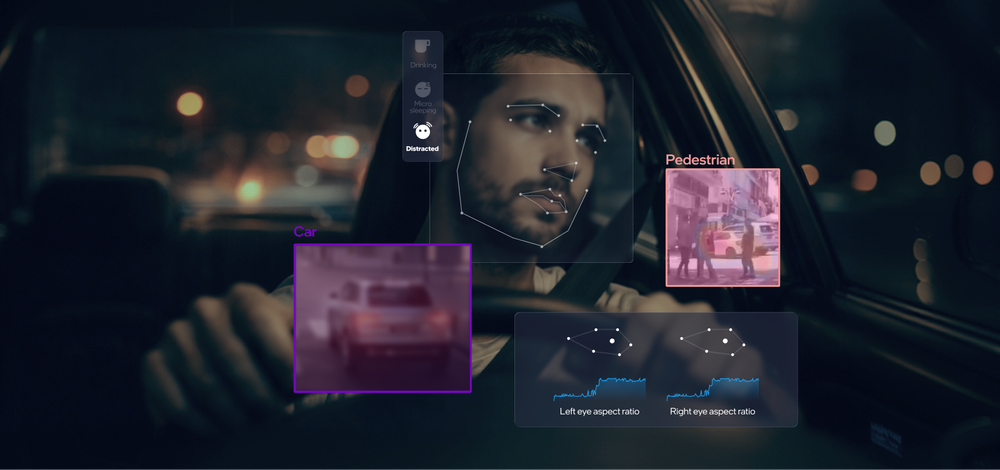

Like most roadway maps, the Mobileye Roadbook includes basic details such as curbs, lane markers, and crosswalks. Our map, however, goes a crucial step or two further, telling the AV not only about the road it needs to drive on but also how it needs to drive on it. Which street sign or traffic light belongs to which lane? Which lane has right-of-way at the intersection? Where do traffic bottlenecks frequently occur? At what speed does traffic commonly travel on a given stretch of road, regardless of the posted speed limit? This type of information might be intuitive to a human driver, but it would not inherently be included in a static HD map. The Mobileye Roadbook reflects these real-world parameters in near-real time, drawing on data uploaded by legions of cars on the road equipped with our technology.
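The post does not describe how the Roadbook aggregates crowdsourced driving data. As an illustration only, one simple way to derive a "commonly traveled speed" from many vehicles' reports is to take the median speed per road segment, which resists outliers from a few unusually fast or slow cars. The segment IDs, the `(segment_id, speed_kph)` report format, and the `typical_speeds` function are all assumptions made for this sketch.

```python
from collections import defaultdict
from statistics import median


def typical_speeds(samples):
    """Aggregate hypothetical (segment_id, speed_kph) reports into a median speed per segment."""
    by_segment = defaultdict(list)
    for segment_id, speed_kph in samples:
        by_segment[segment_id].append(speed_kph)
    # The median is robust to a handful of outlier reports on each segment.
    return {seg: median(speeds) for seg, speeds in by_segment.items()}


reports = [
    ("segment_A", 52.0), ("segment_A", 48.0), ("segment_A", 55.0),
    ("segment_B", 31.0), ("segment_B", 29.0),
]
print(typical_speeds(reports))  # {'segment_A': 52.0, 'segment_B': 30.0}
```

A production pipeline would presumably also account for time of day, weather, and data freshness; the median here only shows the basic idea of separating observed driving behavior from the posted limit.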

What the autonomous vehicle doesn’t need to know, on the other hand, the Mobileye Roadbook (quite literally) leaves by the wayside. Because the AV doesn’t need photographic images of the driving environment, for example, the Roadbook contains only distilled, usable data points that are meaningful to the AV’s operation, and it requires less storage and bandwidth as a result. The highly specific set of parameters captured by REM yields an AV map that, by design, balances level of detail against the digital “weight” of the map. This method provides our AV platform with the precise information it needs: nothing more, nothing less.
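A rough back-of-the-envelope comparison shows why distilled data points weigh so little next to imagery. The sketch below packs a hypothetical lane boundary (21 points, one every 10 m over 200 m) as float32 coordinate pairs and compares it with a single uncompressed 1920×1080 RGB frame. The encoding and the `pack_polyline` function are assumptions for illustration, not the Roadbook's actual format, and real camera images would be compressed, so the true gap is smaller than this raw comparison suggests, though still large.

```python
import struct


def pack_polyline(points):
    """Pack (x, y) points as little-endian float32 pairs after a uint16 point-count header."""
    header = struct.pack("<H", len(points))
    body = b"".join(struct.pack("<ff", x, y) for x, y in points)
    return header + body


# Hypothetical lane boundary: a point every 10 m from 0 to 200 m, offset 1.75 m laterally.
lane_boundary = [(10.0 * i, 1.75) for i in range(21)]
packed = pack_polyline(lane_boundary)

raw_frame_bytes = 1920 * 1080 * 3  # one uncompressed 8-bit RGB camera frame
print(len(packed), raw_frame_bytes)  # 170 6220800
```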

Visit the Mobileye REM webpage and watch this space for more.
