opinion

September 14, 2023

Are we on the edge of a “ChatGPT moment” for autonomous driving?

Prof. Shai Shalev-Shwartz and Prof. Amnon Shashua discuss the end-to-end approach for solving self-driving vehicle problems, asking if it is both sufficient and necessary.

Prof. Shai Shalev-Shwartz and Prof. Amnon Shashua

Large Language Models (LLMs) such as ChatGPT have revolutionized the world of natural language understanding and generation. Building such models generally has two stages: a general unsupervised pre-training step, and a specific Reinforcement Learning from Human Feedback (RLHF) step. In the pre-training phase, the system “reads” a large portion of the internet (trillions of words) and aims to predict the next word given the previous words, thereby learning the distribution of text over the internet. In the RLHF phase, humans are asked to rank the quality of different answers from the model to given questions, and the neural network is fine-tuned to prefer better answers.
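To make the two stages concrete, here is a toy sketch in Python. It is not how ChatGPT or any production LLM is built: a word-pair counter stands in for the neural network, and the pairwise logistic loss is only one common way to turn human rankings into a training signal (the choice of loss is our assumption). The corpus and all function names are made up for illustration.

```python
from collections import Counter, defaultdict
import math


def train_bigram(corpus):
    """'Pre-training' in miniature: count how often each word follows another."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_distribution(counts, prev):
    """Normalize the follow-up counts into a next-word probability distribution."""
    total = sum(counts[prev].values())
    return {w: c / total for w, c in counts[prev].items()} if total else {}


def preference_loss(score_preferred, score_rejected):
    """Pairwise logistic loss: small when the human-preferred answer scores higher."""
    margin = score_preferred - score_rejected
    return -math.log(1.0 / (1.0 + math.exp(-margin)))


# Hypothetical three-sentence "internet".
corpus = ["the car stops", "the car turns", "the pedestrian stops"]
counts = train_bigram(corpus)
dist = next_word_distribution(counts, "the")  # "car" is twice as likely as "pedestrian"

# Ranking signal: the loss drops when the model agrees with the human ranking.
agrees = preference_loss(2.0, 0.0)
disagrees = preference_loss(0.0, 2.0)
```

Fine-tuning then amounts to adjusting the model so that `preference_loss` shrinks across many ranked answer pairs.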

Recently, Tesla has indicated they will adopt this approach for end-to-end solving of the self-driving problem. The premise is to switch from a well-engineered system composed of data-driven components interconnected by many lines of code to a purely data-driven approach consisting of a single end-to-end neural network. When examining a technological solution for a given problem, the two questions one should ask are whether the solution is sufficient and whether it is necessary:

- Sufficiency: does this approach tackle all of the requirements of self-driving?

- Necessity: is this the best approach or is it overkill (trying to kill a fly with a rocket)?

Some critical requirements of self-driving systems are transparency, controllability and performance:

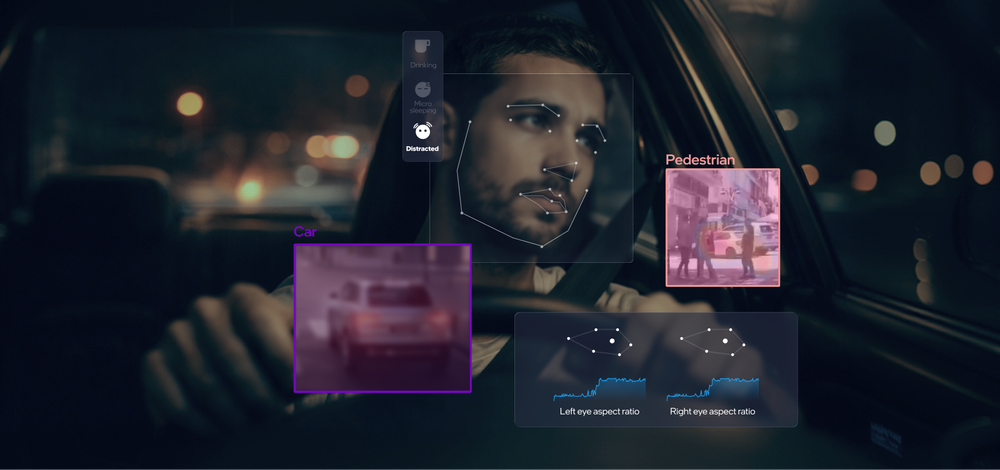

- Transparency and Explainability: Driving systems must perceive and plan. Perception means creating a factual description of reality (the location of lanes, vehicles, pedestrians and so forth). Planning means turning perception into driving decisions that must balance a tradeoff between usefulness and safety. For example, at what speed should the car drive on a residential road with parked vehicles on both sides? Driving slower is safer in case, say, a child runs into the street from between parked cars. But driving too slowly compromises the usefulness of the function and impedes other vehicles. We believe that how a self-driving vehicle balances such tradeoffs must be transparent, so that society, through regulation, can have a say in decisions that affect all road users.

- Controllability: Reproducible system mistakes should be captured and fixed immediately, without compromising the overall performance of the system. Furthermore, while human drivers make bad decisions from time to time, like not yielding properly or driving while impaired, society will not tolerate “lapses of judgement” by a self-driving system, so every decision should be controllable.

- Performance: The Mean-Time-Between-Failures (MTBF) must be extremely high and non-reproducible errors (“black swans”) should be extremely rare.

Now, let us judge the end-to-end solution in light of the above requirements.

For transparency, while it may be possible to steer an end-to-end system towards satisfying some regulatory rules, it is hard to see how to give regulators the option to dictate the exact behavior of the system in all situations. In fact, the most recent trend in LLMs is to combine them with symbolic reasoning elements, also known as good old-fashioned coding. See, for example, Tree of Thoughts, Graph of Thoughts and PAL.

For controllability, end-to-end approaches are an engineering nightmare. Evidence shows that the performance of GPT-4 deteriorates over time as a result of attempts to keep improving the system. This can be attributed to phenomena like catastrophic forgetting and other artifacts of RLHF. Moreover, there is no way to guarantee “no lapse of judgement” for a fully neural system. The trend in LLMs is to combine them with external, code-based tools in order to have guarantees on elements of the system (e.g., the “calculator” in Toolformer and the Jurassic-X neuro-symbolic system).
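The tool-use pattern mentioned above can be sketched in a few lines of Python. This is an illustration only, not the actual interface of Toolformer or Jurassic-X: the `[Calculator(...)]` marker syntax, the function names, and the sample model output are all hypothetical. The point is the division of labor: the network decides where a tool call goes, while ordinary code guarantees what the result is.

```python
import ast
import operator
import re

# Only these arithmetic operators are allowed; everything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression):
    """Deterministically evaluate +, -, *, / arithmetic on numeric literals."""
    def evaluate(node):
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -evaluate(node.operand)
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


# Hypothetical marker the model is assumed to emit when it wants arithmetic done.
CALL_PATTERN = re.compile(r"\[Calculator\((.*?)\)\]")


def resolve_tool_calls(model_output):
    """Replace every [Calculator(...)] marker with the code-computed result."""
    return CALL_PATTERN.sub(lambda m: str(calculator(m.group(1))), model_output)


draft = "The total is [Calculator(12 * 4 + 2)]."  # stand-in for model output
print(resolve_tool_calls(draft))  # prints: The total is 50.
```

Because the arithmetic never passes through the network, a mistake in this element can be reproduced, fixed in code, and verified without retraining anything.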

Regarding performance (i.e., the high MTBF requirement), while it may be possible that with massive amounts of data and compute an end-to-end approach will converge to a sufficiently high MTBF, the current evidence does not look promising. Even the most advanced LLMs make embarrassing mistakes quite often. Would we trust them to make safety-critical decisions? It is well known to machine learning experts that the most difficult problem for statistical methods is the long tail. The end-to-end approach might look very promising for reaching a moderately high MTBF (say, a few hours), but this is orders of magnitude smaller than what is required for the safe deployment of a self-driving vehicle, and each increase of the MTBF by one order of magnitude is harder than the last. It is not surprising that the recent live demonstration of Tesla’s latest FSD by Elon Musk shows an MTBF of roughly one hour.
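The size of this gap is easy to put in numbers. The sketch below assumes a constant failure rate (the standard exponential reliability model) and uses a target MTBF of one million hours purely as a placeholder; that target is our assumption for illustration, not a figure from any regulator or from the discussion above. Only the "roughly one hour" value comes from the demonstration cited.

```python
import math


def orders_of_magnitude_gap(current_mtbf_hours, target_mtbf_hours):
    """How many factors of ten separate the current MTBF from the target."""
    return math.log10(target_mtbf_hours / current_mtbf_hours)


def failure_probability(drive_hours, mtbf_hours):
    """P(at least one failure during drive_hours), assuming a constant failure rate."""
    return 1.0 - math.exp(-drive_hours / mtbf_hours)


current_mtbf = 1.0  # roughly one hour, as in the live demonstration cited above
target_mtbf = 1e6   # HYPOTHETICAL target, chosen only to illustrate the arithmetic

gap = orders_of_magnitude_gap(current_mtbf, target_mtbf)

# Chance of at least one failure on a 30-minute drive under each MTBF.
p_current = failure_probability(0.5, current_mtbf)
p_target = failure_probability(0.5, target_mtbf)

print(f"gap: {gap:.0f} orders of magnitude")
print(f"30-minute failure probability now: {p_current:.2f}")
print(f"30-minute failure probability at target: {p_target:.1e}")
```

Under these assumptions, closing the gap means repeating the "one more order of magnitude" step six times, with each step digging deeper into the long tail.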

Taken together, we see many concerns regarding the ability of an end-to-end approach to fully tackle the self-driving challenge. We would further argue that an end-to-end approach is overkill. The premise of a fully end-to-end approach is “no lines of code, everything should be done by a single gigantic neural network.” Such a system requires maintaining a huge model, with every single update carefully balanced, yet this approach goes against current trends in utilizing LLMs as components within real systems. One such trend is the neuro-symbolic approach, in which the fully neural LLM is one component within a larger system that uses code-based tools (for example, Toolformer). Another trend is the expert approach, in which LLMs are fine-tuned for specific, well-defined tasks; the evidence so far is that small dedicated models outperform significantly larger models (e.g., the Code Llama project). These trends have implications for data and compute requirements, showing that quality of data, architecture, and system design may be far more important than sheer quantity.

In summary, we argue that an end-to-end approach is neither necessary nor sufficient for self-driving systems. There is no question that data-driven methods, including convolutional networks and transformers, are crucial elements of self-driving systems; however, they must be carefully embedded within a well-engineered architecture.
